The COVID-19 pandemic motivated simulation educators to attempt various forms of distance simulation in order to maintain physical distancing and to rapidly deliver training and ensure systems preparedness. However, the perceived psychological safety in distance simulation remains largely unknown. A psychologically unsafe environment can negatively impact team dynamics and learning outcomes; therefore, it merits careful consideration with the adoption of any new learning modality.

Between October 2020 and April 2021, 11 rural and remote hospitals in Alberta, Canada, were enrolled by convenience sampling in in-person-facilitated simulation (IPFS) (n = 82 participants) or remotely facilitated simulation (RFS) (n = 66 participants). Each interprofessional team was invited to attend two COVID-19-protected intubation simulation sessions. An abbreviated Edmondson Psychological Safety instrument compared pooled self-reported pre- and post-psychological safety scores of participants in both arms (n = 148 total participants). Secondary analysis included site champions’ self-matched pre- and post-complete Edmondson Psychological Safety instrument scores.

There was no statistically significant difference between RFS and IPFS total scores on the abbreviated instrument at baseline (p = 0.52; Vargha and Delaney’s A [VD.A] = 0.53) or following simulation sessions (p = 0.36, VD.A = 0.54). There was a statistically significant increase in total scores on the complete instrument following simulation sessions for both RFS and IPFS site champions (p = 0.03, matched-pairs rank biserial correlation coefficient [rrb] = 0.69).

Psychological safety can be established and maintained with RFS. Furthermore, in this study, RFS was shown to be comparable to IPFS in improving psychological safety among rural and remote interdisciplinary teams, providing simulation educators another modality for reaching any site or team.

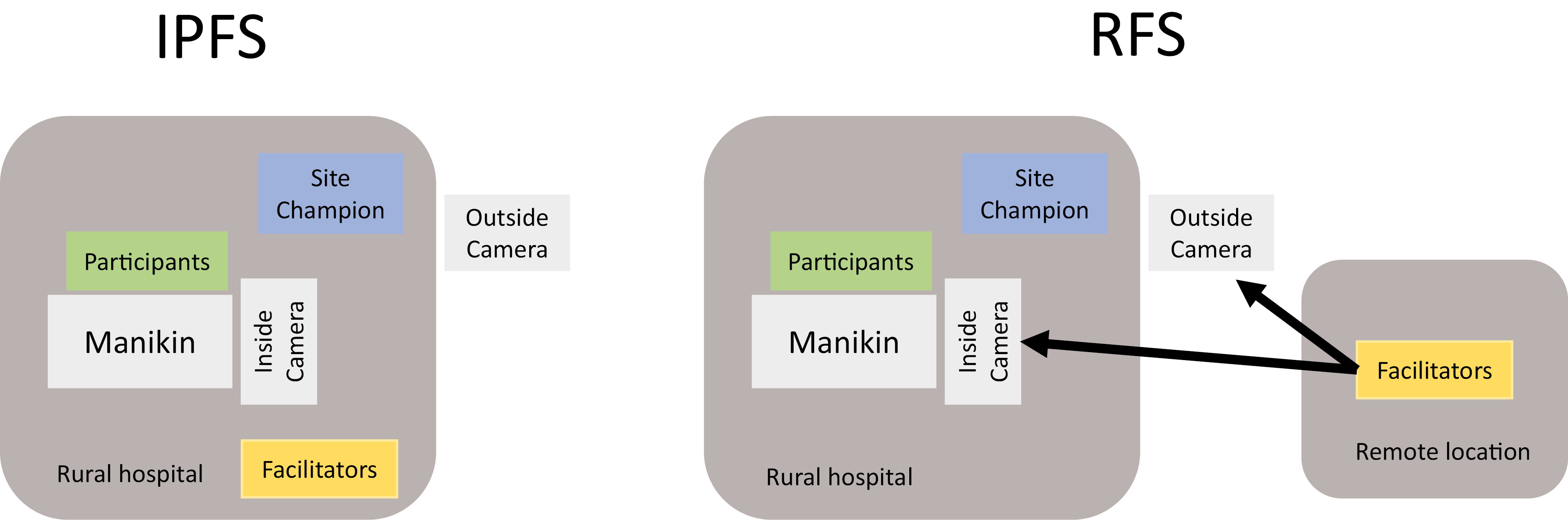

Simulation-based education (SBE) witnessed a rapid adoption of distance simulation as a result of the physical distancing requirements and travel restrictions brought on by the COVID-19 pandemic [1,2]. One distance simulation technique involves in situ teams that are facilitated by a remote facilitator, which we have termed virtually or remotely facilitated simulation (RFS) [3–7]. In contrast to standard in-person-facilitated simulation (IPFS), RFS facilitators interact remotely with simulation participants through synchronous internet-based video-conferencing software. RFS simulation participants are able to practice in situ in their clinical environment while supported by remote facilitators. RFS differs from other forms of distance simulation where both participants and facilitators are interacting via an online communication platform, remote participants are watching a broadcasted simulation, participants and facilitators are interacting asynchronously or where participants are learning through screen-based simulation [8–10]. RFS affords the use of high- or low-technology simulators while enabling access to facilitators and content experts from many locations [11–13].

Previous studies have suggested that high-quality feedback is more important than the method of debriefing [14,15]. However, little is known regarding the comparative efficacy of in-person and remote facilitation. One perceived gap in the understanding of RFS is whether participants feel psychologically safe on the online platform, which has yet to be definitively supported or refuted in the literature [16–18]. Psychological safety is a shared belief amongst participants that the simulation experience is a safe environment to practice interpersonal risk-taking without threat of embarrassment, rejection or punishment [19,20]. A simulated environment that fosters psychological safety improves reflective practice while encouraging speaking up, asking for help and admitting error, allowing the application of self-corrective behaviours in practice and improvement of professional skills [19,21–23]. Psychological safety is felt by the participant and is established, maintained and, if lost, regained by the facilitator. Psychological safety can be measured by self-reported participant surveys such as the Edmondson Psychological Safety instrument [19,24]. A recent narrative review on psychological safety in prelicensure nursing education [25] supports the validity and reliability of the Edmondson model constructs within simulation education. Applying this valid and reliable instrument in this study is important because it establishes whether RFS is as effective as IPFS in improving psychological safety in the simulated learning environment; the virtual environment could be less effective than IPFS if learners are not willing to engage with remote facilitators or if the online platform disincentivizes participation altogether [16].

The aim of this study is to compare RFS with standard IPFS in establishing and maintaining participant psychological safety through a two-arm controlled experimental design. This question helps to inform the use of RFS for low-resource, geographically isolated and cost-conscious situations to deliver simulation for learning and systems improvement [26].

A total of 11 rural or remote hospitals in Alberta, Canada, were enrolled by convenience sampling in an experimental controlled trial. Six hospitals were enrolled in the standard IPFS arm, and five hospitals were enrolled in the RFS arm. Hospitals located greater than a 2-hour drive away from the nearest facilitator were automatically offered RFS with the ability to switch to IPFS if requested. Of the 11 hospitals enrolled, none requested to switch arms during the trial. Local institutional approval was obtained prior to enrolling each hospital in addition to obtaining approval from the Health Research Ethics Board of Alberta (HREBA.CHC-20-0057).

Participants from rural and remote hospitals were recruited through a rural Alberta nursing educator e-mail list. Interested rural educators acted as the local site champion and further recruited local participants through a combination of methods, including e-mails, physical sign-up sheets, posters and snowball sampling. Site champions were also tasked with technical and equipment set-up of the in situ simulation prior to the event with remote guidance from facilitators. They were oriented to their role as a technical assistant during the simulation and co-facilitator during the debriefing. Hospital inclusion criteria consisted of any hospital within Alberta with fewer than 20,000 outpatient visits per year, a requirement for interfacility transfer for intensive care unit care and availability of a local site champion to coordinate the simulation sessions. Exclusion criteria consisted of any hospital without 24-hour emergency department coverage, or any hospital where running a simulation session would compromise patient care [27]. Participants included any frontline healthcare provider from any health profession, including physicians, nurses, respiratory therapists, paramedics, ward clerks, healthcare aides, trainees and students. Site champions were clinical nurse educators, nurses or physicians.

Each hospital was invited to have its staff take part in at least one of two simulation sessions of the same in situ COVID-19-protected intubation scenario, which was facilitated either in person or remotely [3] (Figure 1). Participants, the site champion and the manikin remained in situ at the rural hospital in all sessions. Facilitators provided the pre-briefing, simulation and debrief in person for the IPFS arm and remotely for the RFS arm. Zoom Video Communications™ (https://zoom.us/) was used for facilitation in the RFS arm and for video recording in both arms.

An abbreviated Edmondson Psychological Safety instrument (Appendix A) was administered via QR code to all participants who could anonymously answer the questionnaire online pre- and post-intervention to measure the effect of IPFS and RFS on participant psychological safety. The participants were eligible to attend one or both simulation sessions at their hospital, although fewer than 5% of participants attended both. The facilitators coordinated the logistics of the simulations including the date, time, required technology and simulation equipment in advance with the site champion. All IPFS and RFS facilitators were experienced in the rural and remote context, had prior distance simulation facilitation experience, received the same standardized facilitation skills training on simulation and debriefing, and attended a 2-hour synchronous experiential training webinar in establishing psychological safety in SBE.

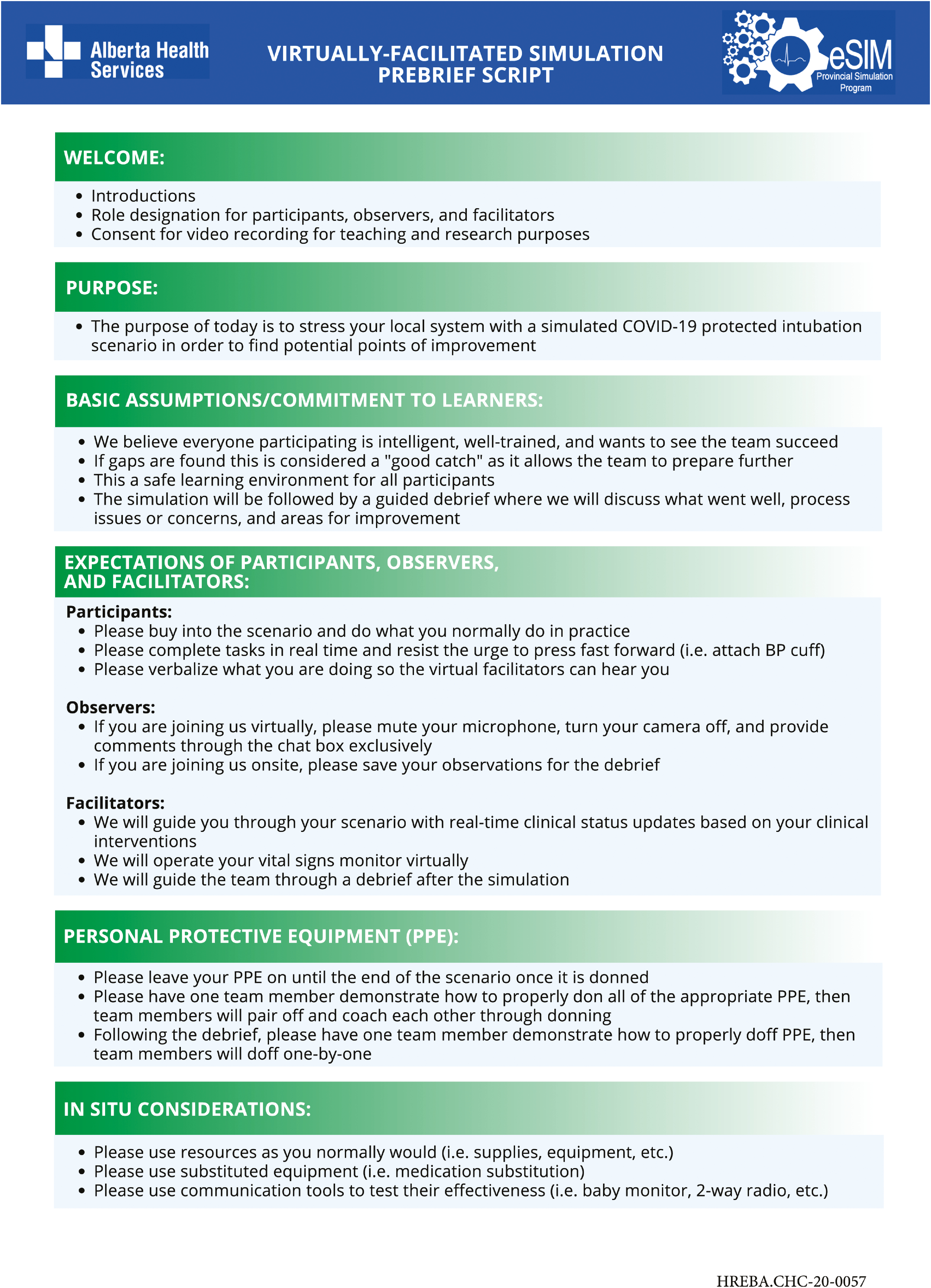

Each simulation session (IPFS or RFS) was completed within 2 hours including a 30-minute technology set-up period. For each session, the facilitators followed a standardized protocol: 1) introduced the study, 2) administered the pre-intervention abbreviated Edmondson Psychological Safety instrument, 3) read the scripted pre-briefing (Appendix B), 4) guided a personal protective equipment (PPE) donning exercise, 5) facilitated the simulation scenario, 6) guided a PPE doffing exercise, 7) facilitated the debriefing and 8) administered the post-intervention abbreviated Edmondson Psychological Safety instrument.

The study introduction and pre-briefing were scripted for standardization and allowed only minor changes in word choice or speaking style [20]. The facilitated debriefing followed the Promoting Excellence and Reflective Learning in Simulation (PEARLS) framework, a well-adopted debriefing framework that was employed by all facilitators [28]. The PEARLS framework includes a participant reaction phase to allow learners to share perspectives, description phase to summarize the scenario, analysis phase to identify and close performance and knowledge gaps, and summary phase to allow participant reflection on the learning experience.

The primary outcome was the participants’ perceived psychological safety rating pre- and post-simulation in the IPFS and RFS arms, measured at each time point as the sum score of six self-reported items. These items, which form the abbreviated Edmondson Psychological Safety instrument, were taken from the first construct (team learning climate) of the complete instrument. The secondary outcome variable was the change in site champion self-reported rating on the complete Edmondson Psychological Safety instrument (Appendix C) following the completion of the second scenario. The complete Edmondson Psychological Safety instrument was not administered to the participants because the research team selected the first seven questions as being most relevant and because time constraints limited full survey completion.

The pre- and post-intervention data were pooled into IPFS and RFS groups for between-group analysis and were not matched by individual participants. Prior to analysis, the Likert-scale items on both the abbreviated and complete Edmondson Psychological Safety survey were coded as never = 0, sometimes = 1 and always = 2, with negatively worded items appropriately reverse-coded prior to creating sum scores as a measure of total psychological safety rating for each instrument.
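The coding and sum-score step described above can be sketched in a few lines of Python. This is an illustrative sketch only, not the study's analysis code (the analyses were run in R). The function names and the example responses are hypothetical, and the choice of items 1, 3 and 5 as the negatively worded items is an assumption based on the wording of the abbreviated instrument.

```python
# Response coding stated in the text: never = 0, sometimes = 1, always = 2.
CODES = {"never": 0, "sometimes": 1, "always": 2}

# ASSUMPTION: items 1, 3 and 5 of the abbreviated instrument are treated as
# negatively worded, so a higher raw response means lower psychological safety.
NEGATIVE_ITEMS = {1, 3, 5}


def score_item(item_number, response):
    """Code one response, reverse-coding negatively worded items (0 <-> 2)."""
    raw = CODES[response.strip().lower()]
    return 2 - raw if item_number in NEGATIVE_ITEMS else raw


def total_score(responses):
    """Sum the coded items; `responses` maps item number -> answer text."""
    return sum(score_item(item, answer) for item, answer in responses.items())


# Hypothetical respondent, not study data.
example = {1: "Never", 2: "Always", 3: "Never",
           4: "Sometimes", 5: "Sometimes", 6: "Always"}
print(total_score(example))  # 2 + 2 + 2 + 1 + 1 + 2 = 10
```

With six items coded 0 to 2, the abbreviated total ranges from 0 to 12, with higher totals indicating greater perceived psychological safety.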

Due to normality violations, differences between IPFS and RFS groups for the abbreviated Edmondson Psychological Safety instrument were assessed both pre- and post-intervention using a two-sample Wilcoxon rank-sum test, a non-parametric alternative to an independent-samples t-test. Post-intervention, Wilcoxon rank-sum tests were again utilized to determine if the IPFS and RFS groups differed in their responses to each of the six abbreviated Edmondson Psychological Safety instrument questions. Vargha and Delaney’s A (VD.A) was calculated for all between-group comparisons, as a recommended effect size for Wilcoxon rank-sum tests [29].
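For readers unfamiliar with VD.A, the statistic estimates the probability that a randomly chosen score from one group exceeds a randomly chosen score from the other, counting ties as half. A value of 0.5 indicates no difference between groups. The Python sketch below illustrates the definition. It is not the study's R code, and the function name and the example scores are hypothetical.

```python
def vargha_delaney_a(x, y):
    """Estimate P(X > Y) + 0.5 * P(X == Y) over all cross-group pairs."""
    greater = sum(1 for a in x for b in y if a > b)
    ties = sum(1 for a in x for b in y if a == b)
    return (greater + 0.5 * ties) / (len(x) * len(y))


# Hypothetical total scores for two small groups, not study data.
group_a = [10, 12, 9, 11]
group_b = [9, 10, 8, 12]
print(vargha_delaney_a(group_a, group_b))  # (9 + 0.5 * 3) / 16 = 0.65625
```

The pairwise loop is quadratic in the group sizes, which is acceptable for samples of the size reported here. A rank-based formula gives the same value more efficiently for large samples.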

Prior to exploring the abovementioned between-group differences, the Scheirer–Ray–Hare test, a non-parametric alternative to a factorial analysis of variance (ANOVA), was used to examine whether an interaction effect existed between the timing of the simulation (pre/post) and the type of simulation (RFS/IPFS) on the total psychological safety score of the abbreviated Edmondson Psychological Safety instrument. Partial eta-squared effect sizes were also calculated.

The self-reported data from the site champions for the complete Edmondson Psychological Safety instrument (pre/post) were analyzed using a paired (two-sample) signed-rank test, a non-parametric alternative to a paired-samples t-test, with the Pratt method used to handle zero differences [30]. The magnitude of this effect was quantified by the matched-pairs rank biserial correlation coefficient (rrb), which is a recommended effect size statistic in such settings [31].
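As a rough illustration of how a matched-pairs rank biserial correlation can be obtained, the Python sketch below ranks the absolute pre/post differences and compares the rank sums of positive and negative differences. It rests on two stated assumptions: it uses one common definition, (W+ − W−) / (W+ + W−), and it handles zeros in the Pratt style by including them in the ranking and then discarding their ranks. The study's R implementation may differ in detail. All names and the example data are hypothetical.

```python
def average_ranks(values):
    """Rank values from 1..n, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j to cover the run of values tied with position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def rank_biserial_pratt(pre, post):
    """Matched-pairs rank biserial correlation with Pratt-style zero handling."""
    diffs = [b - a for a, b in zip(pre, post)]
    # Pratt convention (assumed): zeros take part in the ranking,
    # but their ranks are dropped from both rank sums.
    ranks = average_ranks([abs(d) for d in diffs])
    w_plus = sum(r for d, r in zip(diffs, ranks) if d > 0)
    w_minus = sum(r for d, r in zip(diffs, ranks) if d < 0)
    if w_plus + w_minus == 0:
        return 0.0  # every pair is unchanged
    return (w_plus - w_minus) / (w_plus + w_minus)


# Hypothetical paired scores, not study data.
pre = [10, 12, 9, 11]
post = [12, 12, 10, 10]
print(rank_biserial_pratt(pre, post))  # (6.5 - 2.5) / 9, about 0.444
```

Values near +1 indicate that almost all ranked change is in the direction of higher post-intervention scores, and values near −1 the reverse.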

All analyses were conducted in R Version 4.1.2 [32] with statistical significance defined as p < 0.05.

Potential harms were mitigated through the use of rigorous inclusion and exclusion criteria and informed consent process. Specifically, patient harm was avoided by excluding hospitals where simulation sessions would negatively impact patient care either due to patient care priorities, or critical shortage of staffing. Unintended consequences with the use of video (e.g. use for performance reviews or evaluation) were mitigated through secure video storage on a password-protected drive which was only accessible to the research team in compliance with data research requirements by the research ethics board. While the video recordings show identifiable participants, all data from the study have been aggregated such that any linkage between type of professional or other demographic information has been anonymized.

In total, 148 participants were enrolled throughout the 11 rural and remote hospitals through 20 simulation sessions between November 2020 and April 2021. Of the 148 participants, approximately 55% (n = 82) were included in the IPFS arm and 45% (n = 66) in the RFS arm.

Baseline demographic characteristics of the site champions and participants in both IPFS and RFS arms were collected as part of the pre-session surveys (Table 1). Participants were most commonly nurses (53.4%) but also included physicians (17.6%), respiratory therapists (2.7%), paramedics (2.7%) and trainees (23.0%). IPFS teams were more likely to involve trainees (IPFS = 31.7%; RFS = 12.1%) and were more likely to have fewer years in practice compared with RFS respondents. The two IPFS groups with the greatest trainee involvement were geographically proximate to academic centres. Despite the fewer years in practice in IPFS respondents, RFS respondents were less likely to have prior simulation experience. Site champions were exclusively nurse educators in the RFS arm and either a nurse educator or physician in the IPFS arm. The majority of site champions reported 10–20 years of clinical experience with IPFS site champions having more prior simulation experience than their RFS counterparts.

| Characteristic | IPFS | RFS |
|---|---|---|
| **Site Champion** | n = 6 | n = 5 |
| Nurse | 3 | 5 |
| Physician | 3 | 0 |
| Time in Practice (years) | | |
| < 5 | 1 | 0 |
| 5–9 | 1 | 0 |
| 10–20 | 4 | 4 |
| > 20 | 0 | 1 |
| Prior Simulations (number) | | |
| None | 0 | 1 |
| < 5 | 0 | 1 |
| 5–10 | 1 | 1 |
| > 10 | 5 | 2 |
| **Participant** | n = 82 | n = 66 |
| Nurse | 34 | 45 |
| Physician | 15 | 11 |
| Respiratory Therapist | 3 | 1 |
| Diagnostic Imaging / Laboratory | 0 | 1 |
| Paramedic | 4 | 0 |
| Resident Physician | 12 | 2 |
| Medical Student | 4 | 5 |
| Nursing Student | 10 | 1 |
| Time in Practice (years) | | |
| < 5 | 51 | 20 |
| 5–9 | 14 | 15 |
| 10–20 | 12 | 13 |
| > 20 | 5 | 18 |
| Prior Simulations (number) | | |
| None | 12 | 20 |
| < 5 | 35 | 23 |
| 5–10 | 17 | 12 |
| > 10 | 18 | 11 |

IPFS – in-person-facilitated simulation, RFS – remotely facilitated simulation

Prior to exploring between-group differences, the Scheirer–Ray–Hare test, a non-parametric alternative to a factorial ANOVA, indicated that no significant interaction effect existed between the timing of the simulation (pre/post) and the type of simulation (RFS/IPFS) on the total psychological safety score of the abbreviated Edmondson Psychological Safety instrument (p = 0.81, ηp² < 0.001), with the partial eta-squared effect size indicating negligible to no effect.

Analysis of between-group differences (Wilcoxon rank-sum test) at baseline, i.e. pre-intervention, revealed no statistically significant difference between RFS (Mean = 9.32, standard deviation [SD] = 2.15) and IPFS (Mean = 9.59, SD = 1.94) arms on the total score of the self-reported abbreviated Edmondson Psychological Safety instrument (p = 0.52, VD.A = 0.53). Post-intervention, there was still no statistically significant difference between RFS (Mean = 10.36, SD = 1.72) and IPFS (Mean = 10.65, SD = 1.57) arms (p = 0.36, VD.A = 0.54). Based upon effect sizes, simulation type had a non-existent to negligible effect on establishing or maintaining psychological safety.

Table 2 presents the post-intervention responses on each of the six team learning climate subscale questions that comprise the abbreviated Edmondson Psychological Safety instrument. Responses to five of the six questions did not differ significantly between RFS and IPFS respondents, but responses to Question 3, ‘In this team, people are sometimes rejected for being different’, differed significantly (p < 0.01).

| Question | RFS, Mean (SD) | IPFS, Mean (SD) | p-value |
|---|---|---|---|
| 1. When someone makes a mistake in this team, it is often held against him or her. | 1.77 (0.49) | 1.84 (0.46) | 0.237 |
| 2. In this team, it is easy to discuss difficult issues and problems. | 1.72 (0.54) | 1.73 (0.63) | 0.527 |
| 3. In this team, people are sometimes rejected for being different. | 1.85 (0.44) | 1.98 (0.22) | 0.007* |
| 4. It is completely safe to take a risk on this team. | 1.50 (0.59) | 1.48 (0.65) | 0.970 |
| 5. It is difficult to ask other members of this team for help. | 1.67 (0.66) | 1.77 (0.62) | 0.250 |
| 6. Members of this team value and respect each other’s contributions. | 1.85 (0.40) | 1.87 (0.49) | 0.266 |

* Statistically significant (p < 0.05), SD – standard deviation

Furthermore, for participants in both IPFS and RFS arms, there was a statistically significant difference in the abbreviated Edmondson Psychological Safety instrument between pooled total pre-scores and the pooled total post-scores (IPFS: p < 0.01, VD.A = 0.66; RFS: p < 0.01, VD.A = 0.64). Both arms experienced a statistically significant increase in psychological safety rating that, as noted previously, did not differ between groups at either time point. Based on our research question, we can conclude that RFS is comparable to IPFS in maintaining and establishing psychological safety.

Secondary outcomes compared RFS and IPFS on a self-reported measure of psychological safety based on the complete Edmondson Psychological Safety instrument for site champions. Self-reported scores on the instrument for the site champions exhibited adequate internal consistency reliability as measured by Cronbach’s coefficient alpha (Pre-intervention α = 0.83; Post-intervention α = 0.87), which was consistent with the findings in Edmondson [19].
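Cronbach’s alpha compares the sum of the individual item variances with the variance of the total score: α = k / (k − 1) × (1 − Σ item variances / total-score variance), where k is the number of items. The Python sketch below is a minimal illustration of that standard formula using sample variances. The function name and the example responses are hypothetical and are not the study data.

```python
from statistics import variance


def cronbach_alpha(rows):
    """Cronbach's alpha; `rows` holds one list of item scores per respondent."""
    k = len(rows[0])  # number of items
    item_vars = [variance([row[i] for row in rows]) for i in range(k)]
    total_var = variance([sum(row) for row in rows])
    return (k / (k - 1)) * (1 - sum(item_vars) / total_var)


# Hypothetical coded responses (0-2) from four respondents on three items.
responses = [
    [2, 2, 1],
    [1, 1, 1],
    [0, 1, 0],
    [2, 1, 2],
]
print(round(cronbach_alpha(responses), 2))
```

The function assumes at least two items, at least two respondents and a non-zero total-score variance. Alpha approaches 1 when items vary together across respondents.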

A signed-rank test using the Pratt method to handle zero differences revealed a statistically significant increase in psychological safety from the pre-test scores (Mean = 30.73, SD = 5.33) to post-test scores (Mean = 34.64, SD = 5.90) on the complete Edmondson Psychological Safety instrument, and the matched-pairs rank biserial correlation coefficient indicated that this effect was large in magnitude (p = 0.03, rrb = 0.69). Therefore, both RFS and IPFS showed an improvement in participant psychological safety following the intervention across all constructs in the complete Edmondson Psychological Safety instrument.

Psychological safety can be established and maintained with RFS. Furthermore, RFS is equivalent to IPFS in improving psychological safety among rural and remote interdisciplinary teams [33]. Of note, Question 3, ‘In this team, people are sometimes rejected for being different’, was the only question in the abbreviated Edmondson Psychological Safety instrument that differed significantly between RFS and IPFS groups, with the RFS group responding more negatively. Overall pooled scores did not reveal statistically significant differences between groups on the total psychological safety rating. This particular question may reflect an underlying difference between the groups: RFS groups were more remote healthcare teams with higher reliance on transient healthcare providers, whereas IPFS groups were more stable rural healthcare teams [34]. Another possible explanation is that RFS does not foster the development of team culture to the same degree as IPFS does. However, this difference was not large enough to produce a difference in the pooled analysis of the total score on the abbreviated Edmondson Psychological Safety instrument.

RFS falls within the growing field of telesimulation which could benefit from standardized systematic methodology for successful implementation [14,35,36]. The adoption of telesimulation is rapidly growing due to recent pressures for distance learning from the pandemic [1,9,16,37,38]. Despite the finding of potential equivalence between IPFS and RFS, this study does not claim psychological safety of all different types of telesimulation as our study only describes one type of telesimulation (RFS).

This study supports the use of virtual facilitation in SBE by establishing potential equivalence of psychological safety with virtual facilitation compared with traditional in-person facilitation. While the reliability of self-reported survey data is often questioned due to its subjective nature, psychological safety is inherently subjective and self-defined by the participant. Therefore, this study benefits from the ability to match a subjective tool with subjective outcome measures.

This intervention results from the combination of recently available technology and immediate need for remote learning [1,9]. Future applications of RFS could be extended beyond rural and remote hospital teams to include intensive care, acute care, obstetrical, operating room, inpatient ward, public health, addictions and mental health, and homecare teams. The RFS process and methodology, although initially developed out of necessity for low-resource hospitals, could be applied to high-resource hospitals in the interest of increased efficiency and cost-effectiveness [26]. Further research questions that explore the cost–benefit analysis comparing RFS with IPFS would be beneficial to quantify the suspected cost reduction of transitioning from IPFS to RFS. A more robust follow-up study would include complete randomization of sites into either IPFS or RFS with a matched-pairs design for pre- and post-surveys at the level of the individual participant. It is reasonable to suggest that improved psychological safety of healthcare providers while learning in simulation would ultimately improve patient care; however, this trial is unable to make this conclusion.

Recent adoption of telesimulation has generated many questions regarding quality, feasibility, implementation strategies and outcomes [3]. With the need to support the current or other potential pandemics, virtual or remote facilitation has seen a significant surge in activity across different educational contexts including undergraduate, continuing medical and interprofessional education programs. For example, the COVID-19 pandemic led to the development of novel approaches to remote undergraduate education, which involved moving traditional in-person simulation to online environments [39,40].

The literature identifies a key feature of successful distance education as having facilitators who are skilled in facilitation and technology management [36,41]. Another key feature of virtual facilitation is the facilitator’s ability to create a safe container for understanding through the facilitator’s presence, authenticity and trust within the group [37,42,43]. Some have found teledebriefing to be inferior to in-person debriefing yet acknowledge that teledebriefing provides a practical alternative to in-person experiences [44]. This study scaffolds on the existing literature by identifying the importance of delineating the additional roles needed for successful virtual facilitation and virtual debriefing, by clarifying learner expectations through proactive needs assessments and by explicit sharing of learning objectives ahead of time to mitigate previously identified challenges with telesimulation.

While there has been a burgeoning amount of literature describing telesimulation to support rural medical education in response to the COVID-19 pandemic, we are also seeing a shift in the application of remote facilitation to include urban centres, outreach programs and non-clinical groups [45–48]. For example, the International Nursing Association for Clinical Simulation and Learning (INACSL) has recognized the need for best practice standards in facilitated virtual synchronous debriefing [18]. There has also been increasing interest in psychological safety in SBE, specifically within remote debriefing, where the community of inquiry framework emphasizes social, cognitive and educator presence, used in combination to establish and maintain psychological safety [16]. The authors of that work identify both the essential explicit and implicit strategies used to establish and maintain psychological safety.

A limitation of this experimental trial is the inability for complete randomization of hospitals due to geographic constraints of the rural and remote environment. This trial was conducted across vast geography where winter road conditions and travel restrictions precluded facilitators from driving to farther hospitals such that smaller remote hospitals were disproportionately enrolled in the RFS arm. Trial findings may reflect a difference in geography and simulation experience and not the facilitation method. This limitation was mitigated by enrolment of several geographically closer sites in the RFS arm during the peak of the pandemic when in-person learning events were not possible. This limitation of convenience sampling may explain the differences between IPFS and RFS participant and site champion baseline demographics with the IPFS arm having slightly more simulation experience and being comprised of more trainees when compared with the RFS teams (Table 1).

Another limitation of this trial is the variability between facilitators who may have different facilitation styles. We attempted to mitigate this limitation by selecting experienced facilitators with similar practice backgrounds (rural family physicians and rural nurses) who have all undergone the same standardized training in facilitation and psychological safety; however, inter-facilitator differences are unavoidable. Of the team of seven facilitators, four facilitated both IPFS and RFS sessions, while one facilitated only IPFS, and two facilitated only RFS.

A further limitation of this trial is that the validated Edmondson Psychological Safety instrument was abbreviated for expeditious participant data collection and to increase survey completion rates which may have impacted the validity and reliability of the abbreviated instrument. There have been no known valid and reliable measurement tools developed to measure psychological safety for RFS. Future studies should consider the psychometric testing of these instruments in advancing this burgeoning area of new inquiry in RFS.

However, the abbreviated Edmondson Psychological Safety instrument construct of team learning climate demonstrates internal validity through improvement of the outcome measure following the trial intervention. Additionally, to mitigate this limitation, the validated complete Edmondson Psychological Safety instrument was administered to a smaller sample of the site champions (n = 11) who had more time for survey completion. The data from the validated instrument were analyzed as a secondary outcome and supported our findings from the abbreviated Edmondson Psychological Safety instrument. This finding was interpreted cautiously because the site champions from both RFS and IPFS arms did not directly participate in the simulation and represent a single perspective of hospital culture, that of a local leader or educator who may be motivated to present the hospital in a positive light, which may bias their perspective.

Another limitation of the study’s design was that, due to resource limitations, the facilitators did not assign individual participant codes to each participant, limiting the ability to match samples of pre and post data for both RFS and IPFS sessions. Consequently, researchers were unable to match pre and post data at the individual participant level, which is required to conduct a more powerful paired samples statistical analysis.

Our study advances this existing understanding of psychological safety in telesimulation by providing empirical evidence through an in situ experimental trial demonstrating that psychological safety can be achieved and maintained in a remotely facilitated simulation environment. Mentoring rural and remote simulation champions in a psychologically safe telesimulation modality such as RFS can have a ripple effect, building local capacity for accessible and timely medical education to frontline staff.

As the field of telesimulation grows within SBE, there will be a need to establish best practice standards [10,18]. Telesimulation will likely become another educational approach for SBE. The ability to deliver training in resource-limited environments, assess learners remotely and overcome geographical obstacles, in ways that offset resource utilization and are available outside of major academic medical centres, has been identified as an even greater post-pandemic need [12,40]. The authors encourage simulation faculty to use the RFS methodology described herein as a supplemental educational tool through which they can apply existing in-person simulation facilitation principles to reach teams that were previously precluded from SBE due to lack of access, while also exploring urban applications.

The authors thank Drs. Gavin Parker and Sheena CarlLee for their comments and insights.

SR, KS, MJ, ADM, NT, SW, and TC collected data; SR, SR, and AK analyzed the data; all authors contributed to the experimental design and writing of the manuscript.

This research was funded by the Rural Health Professions Action Plan (RhPAP) of Alberta and in-kind funding through the Alberta Health Services Provincial Simulation Program (eSIM).

The data analysis is available upon request.

This research protocol was approved by the Health Research Ethics Board of Alberta (HREBA) – Community Health Committee (CHC) (ethics identification HREBA.CHC-20-0057).

None declared.


| Questions | Answers |
|---|---|
| When someone makes a mistake in this team, it is often held against him or her. | Never / Sometimes / Always |
| In this team, it is easy to discuss difficult issues and problems. | Never / Sometimes / Always |
| In this team, people are sometimes rejected for being different. | Never / Sometimes / Always |
| It is completely safe to take a risk on this team. | Never / Sometimes / Always |
| It is difficult to ask other members of this team for help. | Never / Sometimes / Always |
| Members of this team value and respect each other’s contributions. | Never / Sometimes / Always |

| Questions | Answers |
|---|---|
| When someone makes a mistake in this team, it is often held against him or her. | Never / Sometimes / Always |
| In this team, it is easy to discuss difficult issues and problems. | Never / Sometimes / Always |
| In this team, people are sometimes rejected for being different. | Never / Sometimes / Always |
| It is completely safe to take a risk on this team. | Never / Sometimes / Always |
| It is difficult to ask other members of this team for help. | Never / Sometimes / Always |
| Members of this team value and respect each other’s contributions. | Never / Sometimes / Always |
| Problems and errors in this team are always communicated to the appropriate people (whether team members or others) so that action can be taken. | Never / Sometimes / Always |
| We often take time to figure out ways to improve our team’s work processes. | Never / Sometimes / Always |
| In this team, people talk about mistakes and ways to prevent and learn from them. | Never / Sometimes / Always |
| This team tends to handle conflicts and differences of opinion privately or off-line, rather than addressing them directly as a group. | Never / Sometimes / Always |
| This team frequently obtains new information that leads us to make important changes in our plans or work processes. | Never / Sometimes / Always |
| Members of this team often raise concerns they have about team plans or decisions. | Never / Sometimes / Always |
| This team constantly encounters unexpected hurdles and gets stuck. | Never / Sometimes / Always |
| We try to discover assumptions or basic beliefs about issues under discussion. | Never / Sometimes / Always |
| People in this team frequently coordinate with other teams to meet organization objectives. | Never / Sometimes / Always |
| People in this team cooperate effectively with other teams or shifts to meet corporate objectives or satisfy customer needs. | Never / Sometimes / Always |
| This team is not very good at keeping everyone informed who needs to buy in to what the team is planning and accomplishing. | Never / Sometimes / Always |
| This team goes out and gets all the information it possibly can from a lot of different sources. | Never / Sometimes / Always |
| We don’t have time to communicate information about our team’s work to others outside the team. | Never / Sometimes / Always |
| We invite people from outside the team to present information or have discussions with us. | Never / Sometimes / Always |
| Members of this team help others understand their special areas of expertise. | Never / Sometimes / Always |
| Working with this team, I have gained a significant understanding of other areas of expertise. | Never / Sometimes / Always |
| The outcomes or products of our work include new processes or procedures. | Never / Sometimes / Always |